Personalization isn't settings memory. The next leap is your personal agent walking into the vehicle with you.

My AI agent knows what time I am supposed to take my morning supplements, which piece of writing I have been stuck on for three days, who I am meeting at nine, why I am stressed about it, which restaurants I keep going back to, and which intersections I avoid driving through because I once watched something happen there I would rather not watch again. It has my calendar, my health stack, the doctors I trust, the engineers I work with, the small running list of decisions and habits and constraints that pile up over time into the shape of who I actually am. I built this agent. I have been talking to it for long enough that it has a working model of how I think.

Then I get into my car, and the car remembers where I like the seat.

That gap is the next big shift in cabin experience, and most of the industry is still trying to close it from the wrong side.

After six years at Lucid Motors running connected vehicle systems and the data platform behind them, I watched the industry try to solve personalization by making the vehicle smarter. Better infotainment. More natural voice. A familiar Android interface. A friendlier dashboard. All of it real progress, none of it the right shape. The car is not the personalization layer. The car is the safety layer. The personalization belongs somewhere else, and the something else is already in my pocket.

The automotive industry has spent years labeling things "personalized" that are really just settings memory dressed up in better language. Seat profile, climate preset, mirror angle, the radio station I picked last Tuesday, an ambient lighting color I forgot I chose. Useful, in the same way an alarm clock is useful. Not deep.

Take Tesla, which is the most aggressively software-defined car on the road right now. Tesla's self-driving personality is one of five fixed buckets: Sloth, Chill, Standard, Hurry, Mad Max. I pick one. The car uses it until I pick a different one. Most people land on Chill or Standard and never touch the setting again. That is not personalization. That is a five-position switch with marketing on top.

The current AI wave inside vehicles is the better-voice-assistant wave. Google is rolling Gemini into roughly four million eligible vehicles with Google built-in. Mercedes is wiring Google Cloud's automotive AI agent into MBUX. Volkswagen has ChatGPT in IDA through Cerence. A meaningful number of conversations this year between OEMs, the cloud platforms, and the cabin-tech ecosystem center on bringing Gemini into the dashboard, the familiar Android interface, and access to the automotive app store. That work matters. A natural-language voice interface is better than memorizing rigid commands. A familiar Android display is better than the clunky infotainment most cabins shipped with for the last decade.

But none of that is personalization. It is a smarter assistant inside the car. The intelligence still lives in the vehicle. The memory still resets between drivers in any way that actually counts. And the voice on the other end of the conversation still has to learn me from scratch, every time, on every car.

CarPlay solved a different problem, and that is why it won. The car was not where my digital life lived. My phone was. CarPlay projected the familiar world of my phone into the unfamiliar cabin, and drivers started judging the cabin by how well it got out of the way of the phone. The lesson was supposed to be: drivers want the vehicle to recognize the digital world they already live in. The industry treated it as a UI problem. It was not a UI problem. It was an identity problem.

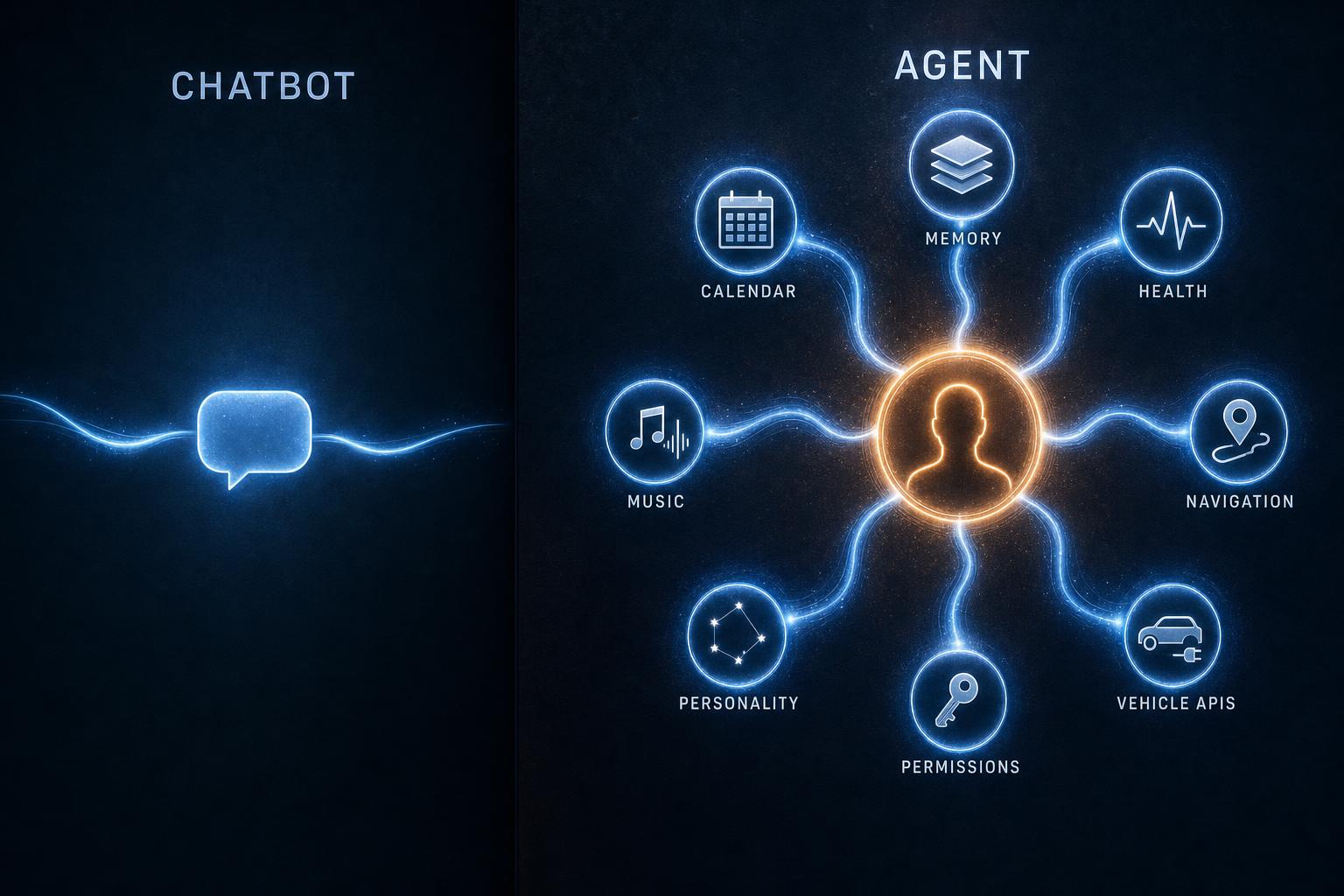

A chatbot answers questions. An agent does work. The difference is not vocabulary, it is the layers underneath. A chatbot is a large language model with a system prompt. An agent is a model wrapped in memory, instructions, tools, permissions, routines, context, and access to trusted data. A chatbot can tell me the weather. An agent knows I have a 9:15 with someone I find draining, sees from my sleep tracker that I slept four hours, sees from my calendar that I have three more meetings stacked after it, knows from a year of pattern that I usually want silence in the car before that kind of morning. By the time I climb in, the route is set to least-stressful instead of fastest, the cabin is two degrees cooler than my default, the ambient lighting is dim and warm, and incoming calls are silenced unless my significant other is calling.

The industry knows what a chatbot is. The industry is still working out what an agent is. The two are not interchangeable, and conflating them is most of why the cabin experience still feels generic even when the voice on the dashboard gets better.

The shape of the next layer is not complicated. My agent authenticates into the vehicle. The vehicle exposes a permissioned set of APIs and tells the agent what it can and cannot influence. The agent receives the relevant context: trip, vehicle state, cabin capabilities, route, weather, time constraints, safety envelope. Then the agent can request changes.

The key word is request. The agent does not control braking, steering, locks, or ADAS behavior outside vehicle-defined limits. The vehicle stays sovereign on safety, regulation, homologation, and final authority. Climate, media, cabin lighting, comfort, route style, charging logistics, service handling, the cadence of how the car asks me questions: that is where personalization actually lives, and that is where the agent operates.

The agent asks. The vehicle decides.

That split is what makes this sane. The agent understands the human. The vehicle understands the machine, the road, the law, and the safety envelope. When the two cooperate, personalization stops being a list of saved settings and becomes an experience that walks in with the driver and walks out with them.

Once the cabin is a host instead of a gatekeeper, the surface shifts.

The seat does not adjust to a saved position. It adjusts to trip length, recent back pain, whether I worked out this morning, whether I am about to spend three hours on the highway, whether someone is sitting in the passenger side. Climate does not snap to a preset. It learns that I want the cabin cooler before work calls and warmer after evening workouts. Music stops being a playlist selector and becomes a context engine: silence before a hard meeting, calm music after a long day, podcasts when I am mentally fresh, no content at all when I need to decompress.

Navigation moves past fastest route. Some days I want fastest. Some days least stressful. Some days a charging stop with decent coffee and a quiet place to take a call. Some days, if I happen to be in the kind of geography where this is possible, a scenic route because I need the extra twenty minutes to decompress before I get to where I am going. The vehicle's cameras can read fatigue, stress, or motion sickness with opt-in sensors, and the agent can shift the cabin tone accordingly: dim the lighting, lower the volume, soften how it asks me questions.

Service changes too. If the car detects an issue, my agent translates the warning into plain language, checks urgency against my schedule, books the appointment, and sends the diagnostic logs with the permissions I authorized. Not a notification on a screen. A handled task.

That is personalization. Not a saved profile, not a better wake word, not a prettier dashboard. A vehicle that recognizes the person who just got in and helps them live better while they are inside it.

One more thing is worth naming. The agent is not just bringing context into the cabin. It is also carrying context back out. We spend serious hours in cars, two or more on a heavy commute day, and a long drive is one of the best places to think, to brainstorm a problem out loud, or to come back from a hard meeting and unpack it with the agent while it is still fresh. A near-miss at an intersection, a bumpy stretch of road, the breakdown on the side of the highway when nobody else is around, the route I now avoid because of what I once watched happen there: all of it lands in the agent's memory. The car becomes one more environment my agent learns me in. The things that happen inside it follow me back into the rest of my life.

The limitation is not imagination. There are plenty of smart people inside legacy automotive who can see this coming, and many of them have been arguing for it internally for years. The limitation is software leverage.

Cars are not phones. They are safety-critical machines built from many domains assembled at the end: infotainment, body, chassis, ADAS, powertrain, battery, HVAC, telematics, diagnostics. Most of those domains live behind supplier-owned components and integration layers that were never designed to expose clean, real-time, user-level personalization APIs. The software-defined vehicle programs that the industry has been talking about for the last five years exist precisely to fix this, and they are running into delays, budget pressure, and architectural debt across most of the incumbent OEMs.

The companies better positioned for the agent layer are the ones that did not inherit a supplier-stitched architecture. Tesla, Rivian, the more software-native Chinese OEMs that built the platform vertically integrated from day one. Volvo and BMW have made real progress on the architectural side. Most of the rest of the industry is still trying to retrofit the software layer onto a vehicle stack that was designed around hardware contracts.

The agent layer will not arrive evenly. It will arrive first on the vehicles that have a clean platform to host it.

Sparked by a recent Discover Tomorrow gathering at the Japan Innovation Campus on intelligent in-car experiences. Thanks to Mark Wilcox, Nader Ghaffari, Christian Litsch, Stefano Marzani, and the rest of the panel for the kind of room that lets these ideas connect.

I work on this layer at TelemetryLab, the company I am building, which sits in the data and AI plumbing between vehicles and the agents that will eventually run on top of them.